As 2016 dawned over CES in Las Vegas, Nev., and now DesignCon in Santa Clara, Calif., it’s becoming clear that accelerating design cycles and exponentially increasing complexity are putting enormous pressure on both designers and test-equipment vendors to step back and rethink their test options to meet current and future challenges.

Some of these newer options, such as flexible test “platforms” and more emphasis on “big data”-type analysis, may serve not only to accelerate test, but also to provide feedback and trend analysis to improve the actual design during the prototyping stages.

CES’s emphasis on multimedia entertainment, home automation, the Internet of Things (IoT), automotive design, and virtual reality made for an enjoyable festival of technological ideation. On the dark side, it revealed the rate of change now facing designers. While design tools have been evolving to accommodate those rapid cycles, test tools and techniques have yet to catch up in addressing, for example, a multiplicity of wireless air interfaces. And more are on the way, including 5G.

“Test has become a higher percentage of the cost of producing a device,” says Eric Starkloff, executive vice president of global sales at National Instruments. It’s not just hardware test at issue; increasingly, it’s software test, too. Speaking specifically about automotive, he says, “We need a test system that evolves with each generation,” but the idea applies across consumer, automotive, industrial, and, of course, high-speed systems.

Achieving this flexibility requires both more automation and a more modular approach. On top of that, test equipment needs flexible front ends to enable the development of what Starkloff calls a “universal test platform.”

Another dynamic at play calls for such flexibility: the deployment of standards before compliance testing has even been defined (e.g., USB 3.1 Gen 2 Type-C), as well as the development of new standards that require extensive research and prototyping of ideas and concepts before they can be defined (e.g., 5G wireless).

In the case of full-on USB 3.1 Gen 2 (SuperSpeed+) Type-C, the requirements have been laid out. However, compliance procedures have yet to be established for the physical connection, which leaves test-equipment vendors and designers in a bit of a quandary.

Granted, the USB Implementers Forum Inc. (USB-IF) and test-equipment companies like Keysight Technologies and Teledyne LeCroy are doing all they can to simplify testing of USB 3.1 interfaces. Keysight developed the U7243B USB 3.1 Compliance Test Software for its Infiniium oscilloscope line, while Teledyne LeCroy has its USB 3.1 test suite (Fig. 1).

Within the test suite, an automated physical-layer test package, QPHY-USB3.1-Tx-Rx, covers both transmitter and receiver test, while the M310C protocol analyzer can test the link layer for protocol compliance. Cables and connectors can be characterized using the SPARQ Series Network Analyzer for S-parameter and TDR testing.

Both Keysight and Teledyne LeCroy use the USB Implementers Forum (USB-IF) SigTest Tool software, at version 4.0.19 as of December 14, 2015. It is the official tool for SuperSpeed USB transmitter voltage, LFPS, and Signal Quality electrical-compliance testing, as well as for calibrating SuperSpeed receiver test solutions. SigTest is designed to be used with the SuperSpeed electrical test fixture, which is available in the USB-IF eStore.

In September last year, Keysight also announced its N7015A Type-C High-Speed Test Fixture, which the company showcased in its booth at DesignCon 2016 (Fig. 2). The N7015A USB Type-C test fixture has a de-embeddable bandwidth of 30 GHz, enabling signal verification and debug of USB 3.1 10-Gb/s designs and other high-speed signal standards to support Type-C connectors. The fixture separates out four lanes of high-speed protocol signals for signal measurement or injection. It also sends low-speed power- and control-line signals to a secondary fixture, the N7016A.

Given that USB 3.1 Gen 2 Type-C is at the cutting edge of connector and interface design, it’s not too surprising that compliance test procedures have yet to be worked out. In the meantime, a software-based approach to test, built on highly flexible equipment, is keeping product development moving.

Ethereal 5G Requires Flexible Test Beds

While USB 3.1 Gen 2 Type-C interface development, test, and compliance continue to evolve to help designers meet the specification, test-equipment developers at least have a standard to use as a reference. That’s not the case with 5G, which is far from defined. Still, vendors must find a way to prepare for what is sure to be the most complex and demanding wireless interface ever developed.

One of the main concerns at the moment is deciding on the modulation scheme. While OFDM, with its high spectral efficiency and tolerance of multipath noise, has held sway for the past 15 years in wireless LANs (Wi-Fi) and LTE cellular, it suffers from a high peak-to-average power ratio (PAPR). As a result, newer schemes are being researched that will avoid the high PAPR while also providing even greater spectral efficiency.
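To make the PAPR concern concrete, the following is a minimal sketch, not taken from any vendor tool, that compares the PAPR of unshaped QPSK symbols with that of one OFDM symbol built from the same symbols. The subcarrier count (64), the random seed, and all function names are illustrative assumptions, and a naive inverse DFT stands in for the IFFT a real transmitter would use.

```python
import cmath
import math
import random


def random_qpsk(n, rng):
    """Return n unit-power QPSK symbols (hypothetical helper)."""
    return [
        complex(rng.choice((-1, 1)), rng.choice((-1, 1))) / math.sqrt(2)
        for _ in range(n)
    ]


def ofdm_symbol(freq_symbols):
    """Naive inverse DFT: one symbol per subcarrier in, n time samples out."""
    n = len(freq_symbols)
    return [
        sum(
            s * cmath.exp(2j * math.pi * k * t / n)
            for k, s in enumerate(freq_symbols)
        ) / math.sqrt(n)
        for t in range(n)
    ]


def papr_db(samples):
    """Peak-to-average power ratio of a block of samples, in dB."""
    powers = [abs(x) ** 2 for x in samples]
    return 10 * math.log10(max(powers) / (sum(powers) / len(powers)))


rng = random.Random(2016)      # fixed seed so runs are repeatable
n_subcarriers = 64             # assumed value, for illustration only

freq = random_qpsk(n_subcarriers, rng)
time_samples = ofdm_symbol(freq)

single_carrier_papr = papr_db(freq)   # constant-envelope symbols
ofdm_papr = papr_db(time_samples)     # sum of 64 subcarriers
worst_case_papr = 10 * math.log10(n_subcarriers)

print(f"Unshaped QPSK PAPR: {single_carrier_papr:.2f} dB")
print(f"OFDM symbol PAPR:   {ofdm_papr:.2f} dB")
print(f"Upper bound (N=64): {worst_case_papr:.2f} dB")
```

Because the subcarriers occasionally add in phase, the OFDM peak power can approach N times the average, which is why the power amplifier has to be backed off and why lower-PAPR waveforms are attractive.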

Cohere Technologies offered up its suggestion for an orthogonal time frequency space (OTFS) scheme at the 3GPP RAN Workshop in September. Others are being developed in direct collaboration with test-equipment and software suppliers, such as Keysight and National Instruments (NI).

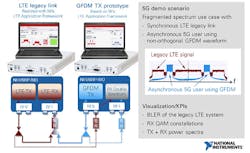

NI, for example, is working with researchers to demonstrate generalized frequency division multiplexing (GFDM) as a potential 5G waveform. For Mobile World Congress 2015, it worked with the 5GNOW project and 5G Lab in Germany to demonstrate GFDM for asynchronous use of fragmented spectrum in coexistence with a real-time legacy LTE link (Fig. 3).

From NI’s point of view, it was a good example of how LabVIEW Communications System Design Suite, Application Frameworks, and flexible software-defined-radio hardware could be used to accelerate the validation of new 5G waveforms through real-world prototyping.

Big Data and Leasing Reduce Test Cost

At last year’s NIWeek, it was clear that big-data analysis is starting to play a major role in prototyping and development, as well as identifying long-term trends. According to Starkloff, NI is uniquely positioned to help developers with test data analysis as it has a more software-centric view of the world. He adds that “those other boxes are automated through our systems.”

NI has partnered with Optimal+ for big-data analysis, and in 2016 it envisions being able to do more edge analytics, where a certain amount of data is analyzed locally for data reduction. The rest is sent to Optimal+, which can apply metadata analysis from multiple clients to help each individual client spot trends more quickly and reduce the total number of tests required, all while lowering the number of potential escapes.
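As a rough illustration of the edge-analytics idea, the sketch below reduces a batch of raw measurements to summary statistics locally and keeps raw values only for out-of-limit readings. It is an assumption-laden example rather than NI’s or Optimal+’s actual method: the function name, the record layout, the voltage readings, and the test limits are all hypothetical.

```python
import statistics


def reduce_at_edge(measurements, lower_limit, upper_limit):
    """Summarize a batch locally; keep raw values only for limit failures."""
    failures = [
        (index, value)
        for index, value in enumerate(measurements)
        if not lower_limit <= value <= upper_limit
    ]
    return {
        "count": len(measurements),
        "mean": statistics.fmean(measurements),
        "stdev": statistics.pstdev(measurements),
        "min": min(measurements),
        "max": max(measurements),
        "failures": failures,  # (position in batch, raw reading)
    }


# Hypothetical batch: supply-rail readings in volts, with made-up limits.
batch = [3.30, 3.31, 3.29, 3.45, 3.30, 3.28, 3.32, 3.10]
summary = reduce_at_edge(batch, lower_limit=3.2, upper_limit=3.4)

print(summary)
```

Only the compact summary would then need to leave the test station, while the failing readings remain available for trend analysis upstream.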

Along with looking more closely at the use and application of test data to reduce test cost and time, many companies may be well advised to look at leasing test equipment rather than buying it outright. Electronic Design will be looking more closely at leasing options in upcoming articles. Suffice it to say for now that test equipment is currently in use for only 15% to 20% of its lifespan, according to George Acris, director of marketing for Microlease (Europe).

With accelerating design cycles, a company really has to ask itself if it’s in the test-equipment calibration, storage, and maintenance business, or the product-development business, and decide where to invest its differentiation dollars. Leasing has many upsides, and test-equipment vendors are also recognizing the benefits of partnering with companies like Microlease.

Of course, cost can be reduced in other ways, such as through the use of USB-based test devices or by opting for app-based test options. While the latter may not yet qualify for serious mainstream use, we will continue to monitor them as they improve—as much for fun as for potential professional use. We’ll see how the competition stacks up for Oscium, which led the way.

Also in 2016, we will continue to track the movement toward modular test equipment, even for benchtops, as the flexibility for a wide range of applications and test scenarios becomes critical, with a multiplicity of wireless interfaces being just one reason.

And last, but not least, is the humble digital multimeter (DMM). It’s come a long way, and with the emergence of portable oscilloscopes and logic analyzers, it’s almost redundant—except for the fact that DMMs are still a whole lot cheaper and a great “bang for the buck.” Leasing may take hold of big boxes, but you’ll have to pry my DMM out of my cold, dead hands.