Ever since the first commercial introduction of a General Packet Radio Service (GPRS) system in 2000, consumers and carriers alike have been clamoring for higher data rates in mobile networks. When one investigates the evolution from GPRS (largely considered “2.5G”) to LTE-Advanced (4G), the formula for producing higher data rates seems fairly straightforward.

In fact, the combination of wider channel bandwidths (200 kHz to 100 MHz), higher-order modulation schemes (Gaussian minimum shift keying or GMSK to 64-state quadrature amplitude modulation or 64QAM), and multiple-input multiple-output (MIMO) technology (single-input single-output or SISO to 8x8 MIMO) enables modern cellular networks such as LTE-Advanced to achieve massively higher data rates than the first GPRS systems (56 kbits/s to 3 Gbits/s).

Going forward, the desire to further increase data rates in cellular communications networks is one of the biggest motivations behind today’s ongoing 5G research. Deployment of 5G networks will likely occur well into the future (think 2020), but engineers are researching 5G technologies today.

Evolution Of The Network Architecture

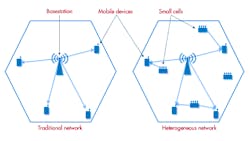

Although 5G cellular networks likely will incorporate a myriad of new technologies such as millimeter-wave radios, massive MIMO, and new physical layers, it is important to understand these innovations in the context of evolving network architecture. Historically, each cell in the network was served by a single basestation that handled a large number of users. Today, however, the architecture of modern cellular networks is increasingly heterogeneous (Fig. 1).

In these heterogeneous networks, known as “hetnets,” traditional basestations are often augmented with a large number of small cells such as femtocells and picocells. Small cells are effectively miniature basestations that can be used to improve coverage in challenging environments and increase the data capacity of the network. In the long term, cellular networks likely will use an increasing number of small cells. In addition, improved Wi-Fi radios known as “carrier-grade” Wi-Fi can potentially augment cellular networks with additional data capacity.

These changes in network topology are already happening. As a result, engineers can consider technologies once deemed impractical for cellular communications. For example, with the shrinking cell sizes offered by small cells, engineers now can consider higher frequency ranges once thought not to be viable due to concerns over signal propagation distance.
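To see why cell size and carrier frequency are linked, the following Python sketch evaluates the standard free-space path loss formula at two frequencies. It is only an idealized illustration: the function name, the 2-GHz and 28-GHz carriers, and the 1-km and 100-m distances are assumptions chosen for the example, and the model ignores blockage, atmospheric absorption, and antenna gain.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def free_space_path_loss_db(distance_m: float, frequency_hz: float) -> float:
    """Free-space path loss in dB between two isotropic antennas."""
    if distance_m <= 0 or frequency_hz <= 0:
        raise ValueError("distance and frequency must be positive")
    return 20.0 * math.log10(
        4.0 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_S
    )


if __name__ == "__main__":
    # Illustrative (assumed) link scenarios, not measured data.
    scenarios = [
        ("2 GHz, 1 km macro cell", 1000.0, 2e9),
        ("28 GHz, 1 km macro cell", 1000.0, 28e9),
        ("28 GHz, 100 m small cell", 100.0, 28e9),
    ]
    for label, distance_m, frequency_hz in scenarios:
        loss_db = free_space_path_loss_db(distance_m, frequency_hz)
        print(f"{label}: {loss_db:.1f} dB")
```

Under these assumptions, moving from 2 GHz to 28 GHz at the same distance adds roughly 23 dB of loss, while shrinking the link from 1 km to 100 m recovers 20 dB. That is the basic reason smaller cells make higher frequencies more practical.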

Millimeter Wave

Today, most cellular networks operate in relatively narrow licensed bands below 2 GHz, where signals propagate reasonably far through free space but available spectrum is limited. Unfortunately, the available bandwidth directly limits the maximum data rate of transmissions in these bands. According to the well-known Shannon-Hartley Theorem, capacity is a linear function of bandwidth for a given signal-to-noise ratio:

Capacity = bandwidth ✕ log2(1 + signal-to-noise ratio)
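As a quick numerical check of the formula, the Python sketch below computes the capacity limit for two channel widths at the same signal-to-noise ratio. The function name, the 20-MHz and 1-GHz bandwidths, and the 20-dB signal-to-noise ratio are assumptions picked for illustration, not figures from any particular network.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Shannon-Hartley capacity limit in bits/s for a given bandwidth and SNR."""
    snr_linear = 10.0 ** (snr_db / 10.0)  # convert dB to a linear power ratio
    return bandwidth_hz * math.log2(1.0 + snr_linear)


if __name__ == "__main__":
    snr_db = 20.0  # assumed signal-to-noise ratio for both channels
    for bandwidth_hz in (20e6, 1e9):  # assumed channel widths
        capacity = shannon_capacity_bps(bandwidth_hz, snr_db)
        print(f"{bandwidth_hz / 1e6:.0f} MHz -> {capacity / 1e6:.1f} Mbits/s")
```

With these assumed values, the limit works out to roughly 133 Mbits/s for the 20-MHz channel and about 6.7 Gbits/s for the 1-GHz channel. Capacity scales in direct proportion to bandwidth when the signal-to-noise ratio is held constant.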

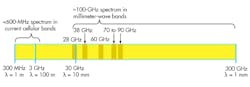

Given the spectrum limitations below 2 GHz, researchers are exploring new frequency ranges that can support substantially larger capacity. Some of the most promising bands for future cellular networks are the millimeter-wave bands at 28 GHz, 38 GHz, and 72 GHz.

The term “millimeter wave” generally describes frequencies that have a wavelength between 10 mm and 1 mm: 30 GHz to 300 GHz. Millimeter-wave bands offer substantially wider bandwidths than today’s cellular bands (Fig. 2). As a result, transmissions in the millimeter-wave bands promise dramatically higher data capacity than is possible in the bands currently in use.
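The relationship between frequency and wavelength is simply wavelength = speed of light / frequency. The short Python sketch below applies it to the band edges and to the candidate bands mentioned above. The function name is an assumption made for this example.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def wavelength_mm(frequency_hz: float) -> float:
    """Free-space wavelength in millimeters for a given frequency in hertz."""
    return SPEED_OF_LIGHT_M_S / frequency_hz * 1000.0


if __name__ == "__main__":
    # Band edges of the millimeter-wave range, then the candidate bands.
    for frequency_ghz in (30, 300, 28, 38, 72):
        print(f"{frequency_ghz} GHz -> {wavelength_mm(frequency_ghz * 1e9):.2f} mm")
```

Note that by this calculation the 28-GHz band has a wavelength slightly longer than 10 mm, so it sits just below the strict 30-GHz boundary even though it is commonly grouped with the millimeter-wave bands.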

Massive MIMO

Over the past decade, MIMO technology has used multiple antennas to increase channel capacity significantly beyond what the Shannon-Hartley Theorem “allows” for a single-antenna link. The basic premise of MIMO is that through the use of multiple antennas, a span of allocated spectrum can support multiple data streams. Although each data stream is transmitted at the same time and at the same frequency and would seem to interfere with the other data streams, the receiver’s use of multiple antennas enables it to separate the combined signal back into the individual data streams.
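A minimal Python sketch of this separation for a 2x2 link follows. It assumes a noise-free channel that the receiver knows perfectly and uses zero-forcing (inverting the channel matrix), which is only one of several possible detection approaches. The channel coefficients and symbol values are hypothetical numbers chosen for illustration.

```python
def invert_2x2(h):
    """Invert a 2x2 complex matrix given as nested lists."""
    (a, b), (c, d) = h
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("channel matrix is singular; streams cannot be separated")
    return [[d / det, -b / det], [-c / det, a / det]]


def mat_vec(m, v):
    """Multiply a 2x2 matrix by a length-2 vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]


# Hypothetical channel: entry [i][j] is the gain from transmit antenna j
# to receive antenna i. Real channels vary over time and must be estimated.
H = [[0.9 + 0.1j, 0.4 - 0.3j],
     [0.2 + 0.5j, 1.0 - 0.2j]]

sent = [1 + 1j, -1 + 1j]        # two symbols sent at the same time and frequency
received = mat_vec(H, sent)     # each receive antenna sees a mix of both streams
recovered = mat_vec(invert_2x2(H), received)  # zero-forcing separation

if __name__ == "__main__":
    print("received :", received)
    print("recovered:", recovered)
```

Each receive antenna observes a different linear combination of the two transmitted symbols, which is what makes the pair of equations solvable. If the two paths were identical, the matrix would be singular and the streams could not be separated.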

Today, several cellular communications standards allow the use of MIMO to improve channel capacity. Starting in 2007 with 3GPP Release 7, HSPA+ supported the use of up to 2x2 MIMO for downlink transmissions. In addition, the LTE standard, introduced with 3GPP Release 8 in 2008, allowed for up to 4x4 MIMO in the downlink, and LTE-Advanced (3GPP Release 10) extended this to 8x8 MIMO.

The use of MIMO technology in today’s wireless standards has grown. Moreover, the next generation of cellular communications systems will likely use MIMO systems with substantially larger numbers of antenna elements—hence the term “massive MIMO.”

For example, researchers at Lund University are using software-defined radio (SDR) systems to prototype one of the first massive MIMO communications links with more than 100 antenna elements (Lund University Massive MIMO). Not only does the use of more antenna elements enable higher data rates, but it also will allow future basestations to better focus downlink energy on a specific wireless device through beamforming.
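The focusing effect of beamforming can be sketched with the array factor of an idealized antenna array. The Python example below assumes a uniform linear array of isotropic elements at half-wavelength spacing with simple phase steering. The function name and parameters are assumptions made for illustration and do not describe the Lund testbed.

```python
import cmath
import math


def array_gain(num_elements: int, steer_deg: float, look_deg: float,
               spacing_wavelengths: float = 0.5) -> float:
    """Power gain of a phase-steered uniform linear array, relative to one element.

    steer_deg is the direction the beam is pointed at; look_deg is the
    direction in which the gain is evaluated. Angles are from broadside.
    """
    phase_step = 2.0 * math.pi * spacing_wavelengths * (
        math.sin(math.radians(look_deg)) - math.sin(math.radians(steer_deg))
    )
    total = sum(cmath.exp(1j * n * phase_step) for n in range(num_elements))
    return abs(total) ** 2 / num_elements


if __name__ == "__main__":
    for n in (8, 100):
        on_target = array_gain(n, steer_deg=20.0, look_deg=20.0)
        off_target = array_gain(n, steer_deg=20.0, look_deg=50.0)
        print(f"{n} elements: on-target {10 * math.log10(on_target):.1f} dB, "
              f"off-target linear gain {off_target:.3f}")
```

In this idealized model, the gain in the steered direction equals the number of elements, so 100 elements yield about 20 dB, while energy radiated toward other directions is strongly suppressed.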

New Physical Layers

A third area of research in 5G communications is the evolution of the physical layer. Historically, the evolution of the cellular physical layer allowed for higher data rates through the use of higher-order modulation schemes and more sophisticated signal structures. For instance, the progression of GSM/EDGE to UMTS introduced code division multiple access (CDMA) technology. In addition, the progression to LTE involved the introduction of orthogonal frequency division multiplexing (OFDM), in the form of orthogonal frequency division multiple access (OFDMA) on the downlink, and single-carrier frequency division multiple access (SC-FDMA) on the uplink.

Similarly, 5G researchers are continuing to investigate new and more efficient signal structures. For example, generalized frequency division multiplexing (GFDM) uses special filtering to reduce interference into adjacent channels versus traditional OFDM. In addition, technologies such as non-orthogonal multiple access (NOMA) multiplex users in the power domain of a transmission for better spectrum utilization. GFDM and NOMA are two of several areas of research into new signal structures designed to enable higher capacity in a given span of spectrum.
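The power-domain idea behind NOMA can be illustrated with a deliberately simplified Python sketch. It assumes two users sharing one resource with BPSK symbols, a fixed 80/20 power split, a noise-free channel, and equal channel gains. All function names and values are hypothetical, and practical NOMA schemes depend on the users having different channel conditions.

```python
import math


def to_symbol(bit: bool) -> float:
    """Map a bit to a BPSK symbol (+1 or -1)."""
    return 1.0 if bit else -1.0


def superpose(far_bit: bool, near_bit: bool, far_power_share: float = 0.8) -> float:
    """Add both users' symbols on the same resource, split by transmit power."""
    far_amplitude = math.sqrt(far_power_share)
    near_amplitude = math.sqrt(1.0 - far_power_share)
    return far_amplitude * to_symbol(far_bit) + near_amplitude * to_symbol(near_bit)


def decode_far(received: float) -> bool:
    """The far user decodes directly, treating the weaker signal as noise."""
    return received > 0.0


def decode_near(received: float, far_power_share: float = 0.8) -> bool:
    """The near user cancels the far user's signal first, then decodes its own."""
    far_bit = decode_far(received)
    residual = received - math.sqrt(far_power_share) * to_symbol(far_bit)
    return residual > 0.0


if __name__ == "__main__":
    for far_bit in (False, True):
        for near_bit in (False, True):
            rx = superpose(far_bit, near_bit)
            print(far_bit, near_bit, "->", decode_far(rx), decode_near(rx))
```

The step in `decode_near` is a basic form of successive interference cancellation: because the far user's signal carries most of the power, it can be detected and subtracted, leaving the lower-power signal intended for the near user.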

Conclusion

As both a student and consumer of today’s wireless technologies, I am tremendously excited about the technologies that will likely be part of a fifth-generation cellular standard. If current research efforts in key areas such as millimeter wave and massive MIMO prove successful, both the design and test of wireless communications systems will never be the same.

In the past, engineers frequently relied on software simulations to test new ideas for communications systems. Today, however, the combination of high-performance graphical software such as NI LabVIEW system design software and flexible SDR prototyping platforms is changing the way engineers test new ideas. For more information on how National Instruments is involved in the design and test of 5G communications, visit www.ni.com/5g.

David A. Hall is a senior product marketing manager at National Instruments, where he is responsible for RF and wireless test hardware and software products. His job functions include educating customers on RF test techniques, product management, and developing product demos. His areas of expertise include instrumentation architecture, digital signal processing, and test techniques for cellular and wireless connectivity devices. He holds a bachelor’s degree with honors in computer engineering from Penn State University.
