Compression Requires The Right Tool For The Job

When my son Adam was four years old, we built a series of rubber band guns that he designed. During these projects, Adam really started understanding the difference between a screwdriver and a chisel and between a jigsaw and an electric drill. As he got older and the complexity of our projects increased, I encouraged him to use the right tool for the job. In other words, he shouldn’t use a screwdriver to split a wooden board when a chisel would do the job better and faster.

Related Articles

- New Techniques Help Compression Surpass Its Practical Limits

- Compression Reduces Memory Bottlenecks, From Supercomputing To Video

- New "Hidden" Compression Techniques Pack More Data On Your Devices

Similar advice applies to compression algorithms. Most computer users know enough about compression to use file utilities like WinZip and WinRAR to shrink large e-mail text attachments. These users also understand that Adobe Photoshop, Picasa, and other lossy image compressors can’t be used for spreadsheets and text. Similarly, they know that WinZip and other lossless compression algorithms don’t deliver much benefit on raw images (such as .tif files) and can’t further compress already compressed images, such as .jpg files.

Even though they aren’t the right tool for all jobs, what would happen if compressors intended for one job (a class of files) were applied to another job (a different class of files)? Let’s perform our own version of using a screwdriver as a chisel with some readily available compression tools.

This file type includes high resolution graphics and schematics when applicable.

The WKdm Binary Data Compressor

The latest version of Apple’s Mac OS X Mavericks has built-in “RAM doubler” functionality. “The new Compressed Memory feature sounds absolutely utopian: the operating system applies data compression to the least important content held in memory to free up more available RAM,” says Daniel Eran Dilger of Apple Insider. “And it does so with such efficiency that the CPU and storage devices work less, saving battery as well.”1

The WKdm compressor recognizes four 32-bit data patterns: all zeros, perfect matches found in a 16-entry dictionary, partial dictionary matches of at least 10 of 32 bits, and a new 32-bit pattern not currently stored in the dictionary. Since the WKdm compression algorithm is relatively simple and uses a very short dictionary, WKdm can be implemented in software at low MIPS, compressing at about 33 instructions per 32-bit word and decompressing at about 23 instructions per word.

In contrast, a Lempel-Ziv compressor (the ubiquitous compression engine in utilities like WinZip and 7-Zip) achieves higher compression ratios but at two or three times slower compression speed. We’ll use WKdm to represent the right tool for compressing real-time binary data.

The APAX Numerical Compressor

Samplify’s APAX (APplication AXceleration) algorithm was designed to compress numbers: integer and floating-point values.2 APAX users specify the datatype being compressed and the desired compression mode (lossless or lossy). For lossy compression, users also select the desired compression ratio or decompressed signal quality. APAX then delivers that user-requested result on numerical datasets.

The APAX algorithm combines an attenuator, a redundancy remover (a sophisticated derivative engine), and a nibble-oriented bit packer to deliver compression ratios between 2:1 and 10:1 for images, video reference frames, sensor data, and floating-point scientific datasets. APAX software is even faster than WKdm, compressing at about 20 instructions per sample and decompressing at about 15 instructions per sample. We’ll use APAX to represent the right tool for compressing real-time numerical datasets.

Comparing WKdm And APAX

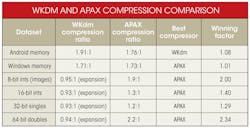

Recognizing that WKdm is the right tool for compressing text and binary computer files (data typically stored in DDR memory), while APAX is the right tool to compress integer and floating-point values, let’s apply both tools to a corpus containing both binary and numerical files. The table provides the results of using the tools on both kinds of data. The datasets used for this comparison (a total of about 1 Gbyte) include:

• An Android mobile phone memory dump

• A Windows laptop memory dump

• The Kodak image suite (8-bit integers)

• 16-bit integer sensor data

• 32-bit and 64-bit floating-point scientific datasets

This corpus is not intended to be exhaustive, but it does illustrate that WKdm’s performance degradation on numerical data (which WKdm was not designed to compress) is larger than APAX’s degradation on binary data (which APAX was not designed to compress). Overall, the APAX compressor achieved the best compression on five of our six datasets.

A Universal Encoder?

A universal encoder for all datatypes, the equivalent of “one tool for all jobs,” would be exceedingly useful (especially if it could be integrated as a hardware block into memory controllers). Yet our brief comparison of two real-time compression products supports four general observations.

First, compressors are developed and tuned to perform optimally on the statistics of certain datasets. Second, some compressors perform better than others on datasets for which they were not developed. Third, system considerations often whittle down the large universe of compressors to a rather short list that can meet demanding real-time constraints, including throughput and latency. As compression algorithms reach the point of diminishing returns, algorithm complexity (MIPS and silicon area) becomes a key compression metric.3

Finally, impending Big Data bottlenecks will require renewed efforts from statisticians and data scientists to develop new models for so-called “unstructured” data to effectively compress such data. Future compression algorithms will only perform well on unstructured data if its underlying regularity (to the degree that such regularity exists) can be adaptively discovered and effectively modeled.

References

1. “Compressed Memory in OS X 10.9 Mavericks aims to free RAM, extend battery life,” Apple Insider, Daniel Eran Dilger, http://appleinsider.com/articles/13/06/12/compressed-memory-in-os-x-109-mavericks-aims-to-free-ram-extend-battery-life

2. “Universal Numerical Encoder and Profiler Reduces Computing’s Memory Wall with Software, FPGA, and SoC Implementations,” Cornell University Library, Al Wegener, http://arxiv.org/abs/1303.4994

3. “As Compression Technologies Reach Their Limits, What’s Next?” Al Wegener, http://electronicdesign.com/embedded/compression-technologies-reach-their-limits-what-s-next

Al Wegener, CTO and founder of Samplify, www.samplify.com, earned an MSCS from Stanford University and a BSEE from Bucknell University.

About the Author

Al Wegener

Al Wegener is the CTO and founder of Samplify Systems, a fabless semiconductor startup in Santa Clara, Calif. He holds 17 patents and is named on additional Samplify patent applications. He earned a BSEE from Bucknell University and an MSCS from Stanford University.

Voice Your Opinion!

To join the conversation, and become an exclusive member of Electronic Design, create an account today!

Leaders relevant to this article: