Multi-Billion-Cycle Tests Require Comprehensive Debug

Debugging today’s advanced systems-on-chip (SoCs) is anything but simple. SoC verification environments require tests spanning billions of cycles (Fig. 1).

With tests of this length, many design teams are turning to emulation systems for system-level hardware/software (HW/SW) co-verification. However, the limited debug environments of most traditional emulators can frustrate SoC verification efforts. Traditional emulation systems that offer only logic-analyzer-style debug tools have limited capture depth and no longer meet the needs of today’s design teams.

Solving this problem requires an emulation system that goes beyond traditional logic-analyzer debug, combining deterministic rerun and debug of multi-billion-cycle system-level tests with full signal visibility and simulation-like debug. Users need the speed and capacity of emulation together with the determinism of register-transfer-level (RTL) simulation, in a full-visibility debug environment that spans billions of cycles.

Traditional Emulation Debug Vs. Simulation

Today, more than 80% of all bugs are found with the traditional simulation environments used by virtually every design team. In the typical simulation use model, the team runs test cases and logs any failures with assertions and monitors, then reruns the failing tests and debugs them in a standard debug environment such as Verdi3. Ideally, the same assertions, monitors, and protocol verification intellectual property (VIP) carry over directly to the emulation environment, which makes emulation easier to use and avoids introducing a separate debug methodology.
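As a rough illustration of that use model, the Python sketch below evaluates an assertion on every cycle of a toy run and logs each failure with its cycle number, which is the information a team needs to rerun and debug a failing test. The `Assertion` and `Monitor` classes and the 4-bit counter standing in for the design are hypothetical; they are not part of Verdi3 or any VIP product.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical per-cycle snapshot of design signals: signal name -> value.
Signals = Dict[str, int]


@dataclass
class Assertion:
    """A named check evaluated every cycle; returns True while the design is healthy."""
    name: str
    check: Callable[[Signals], bool]


@dataclass
class Monitor:
    assertions: List[Assertion]
    failures: List[Tuple[int, str]] = field(default_factory=list)  # (cycle, assertion name)

    def sample(self, cycle: int, signals: Signals) -> None:
        # Evaluate every assertion on this cycle's values and log any violation.
        for a in self.assertions:
            if not a.check(signals):
                self.failures.append((cycle, a.name))


def run_test(num_cycles: int, monitor: Monitor) -> List[Tuple[int, str]]:
    """Toy 'simulation' of a 4-bit counter whose overflow flag is monitored."""
    count = 0
    for cycle in range(num_cycles):
        count = (count + 1) & 0xF           # 4-bit wrap-around
        overflow = 1 if count == 0 else 0
        monitor.sample(cycle, {"count": count, "overflow": overflow})
    return monitor.failures


mon = Monitor([Assertion("no_overflow", lambda s: s["overflow"] == 0)])
print(run_test(20, mon))  # [(15, 'no_overflow')]
```

Because the log records the failing cycle, a rerun can be set up to dump waveforms around cycle 15 rather than across the whole test.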

Unfortunately, traditional emulation environments do not support this simulation-style debug, especially in-circuit emulation (ICE) setups, where the verification environment is implemented principally in external physical hardware. Unlike simulation, traditional emulation is rarely deterministic: the timing and sequence of events can be difficult to reproduce.

As a result, users are typically forced to rely on the emulation system’s integrated logic analyzer: they set up watch points and trigger conditions, then hope that trace memory captures all the information required to debug the failure. While some emulation platforms do offer full visibility with their logic analyzers, that visibility is limited to the depth of the logic analyzer’s trace window. This is where the challenges arise.

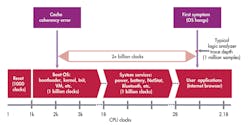

First, most logic analyzers used in emulation today (integrated or not) are limited to a trace depth of approximately 1 million cycles. Even the best available logic analyzers cannot span billions of cycles with full signal visibility into the design. So while these logic analyzers are great for capturing early RTL bugs, such as reset errors that occur hundreds of cycles into a test, they are frustratingly limited when tracing system-level HW/SW integration bugs whose symptoms appear billions of cycles after the actual problem occurred. Because of the trace-depth limitation, users find themselves repeatedly editing trigger conditions and rerunning tests in an effort to capture events earlier and earlier. This brings us to another fundamental challenge: lack of determinism.
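The depth limitation can be sketched as a fixed-size ring buffer that keeps only the most recent cycles before the trigger fires. This Python model is a simplification under stated assumptions: real logic analyzers also support pre- and post-trigger positioning, and the cycle counts here are scaled down (a 1,000-cycle buffer, a 50,000-cycle run) so the example runs quickly. `TraceBuffer` and `run_until_trigger` are hypothetical names.

```python
from collections import deque
from typing import Deque, Optional, Tuple


class TraceBuffer:
    """Hypothetical trace memory that retains only the last `depth` cycles."""

    def __init__(self, depth: int) -> None:
        # deque(maxlen=...) silently discards the oldest sample when full.
        self.samples: Deque[Tuple[int, str]] = deque(maxlen=depth)

    def capture(self, cycle: int, event: str) -> None:
        self.samples.append((cycle, event))

    def window(self) -> Optional[Tuple[int, int]]:
        """First and last cycle still held in trace memory."""
        if not self.samples:
            return None
        return self.samples[0][0], self.samples[-1][0]


def run_until_trigger(depth: int, root_cause_cycle: int, trigger_cycle: int):
    """Capture every cycle until the trigger (the visible crash) fires.

    Returns (root cause still in trace memory?, captured cycle window).
    """
    buf = TraceBuffer(depth)
    for cycle in range(trigger_cycle + 1):
        event = "CORRUPT" if cycle == root_cause_cycle else "ok"
        buf.capture(cycle, event)
    seen = any(event == "CORRUPT" for _, event in buf.samples)
    return seen, buf.window()


# Root cause early in the run, crash much later: the evidence has scrolled out.
print(run_until_trigger(depth=1_000, root_cause_cycle=100, trigger_cycle=50_000))
# (False, (49001, 50000))
```

Only a root cause inside the last 1,000 captured cycles would survive; moving the trigger earlier helps only if the same failure reproduces on the next run.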

Traditional emulation systems, especially when running in in-circuit mode, offer virtually no determinism. Because the timing of events varies from run to run, failures can vary too, thwarting efforts to trace a specific problem. As a simple example, imagine booting a tablet computer in emulation where there’s a flaw in the CPU’s cache coherency logic. In one run, the bug corrupts the executable for the Internet browser early in the boot sequence. Several billion cycles later, the browser is launched and crashes.

Since the trace buffer is only a few million cycles deep, the engineer cannot see the original cause of the browser crash and is forced to change the trigger conditions, rerun the test, and try to capture the bug earlier. Unfortunately, because event timing is not reproducible, something else in the cache is corrupted on the second run. A different program crashes, and the trigger conditions are no longer valid.
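The run-to-run variation in this example can be modeled by making the victim of the cache bug depend on when an external in-circuit event arrives, a timing that nothing records. In the Python sketch below, the program list, the 1,000-cycle granularity, and the function names are all hypothetical; the point is only that a trigger written for the first run's symptom ("browser crashed") may never fire on the next.

```python
import random

# Hypothetical programs whose code is loaded during the simulated tablet boot.
PROGRAMS = ["browser", "mail", "camera", "maps"]


def boot_and_run(interrupt_cycle: int) -> str:
    """Toy model: return the program that eventually crashes.

    Which program's pages are in flight when an external interrupt races the
    cache-coherency bug depends on exactly when that interrupt arrives.
    """
    return PROGRAMS[(interrupt_cycle // 1_000) % len(PROGRAMS)]


def ice_run() -> str:
    """In-circuit run: interrupt timing jitters and is not recorded."""
    return boot_and_run(random.randint(0, 1_000_000))


def trigger_fires(crashed: str) -> bool:
    """Trigger condition written after the first run saw the browser crash."""
    return crashed == "browser"


for attempt in range(5):
    crashed = ice_run()
    print(f"run {attempt}: {crashed} crashed, trigger fired: {trigger_fires(crashed)}")
```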

In addition, when users are ready to move from a traditional emulation system into a simulation debug environment, capturing emulation waveforms can be a long and difficult process. Potentially millions of cycles of full visibility debug data must be transferred from emulation trace memory to a PC, where it is then processed to generate waveforms. On many emulation platforms, the engineer is forced to wait an hour or more for this processing to complete before any waveforms can be viewed.

Using A Simulation Debug Methodology With Emulation

To address these challenges, verification teams need the debug methodology every engineer already uses in simulation, combined with the capacity and speed of a full emulation environment. Such simulation-debug-enabled environments must have three key qualities:

• Support for a fully featured system-level verification environment, including transaction-level verification

• Billion-cycle capacity and fully deterministic rerun of tests

• Integration with a simulation-like debug environment with fast time-to-waveform capabilities

New generations of emulators, such as Synopsys’ ZeBu Server, offer simulation-like debug with full signal visibility and deterministic rerun and debug of multi-billion-cycle system-level test sequences. Through integration with the popular Verdi3 debug environment, users can quickly analyze waveforms, perform transaction-level debug, and work in the same debug environment they already know from their simulation tools.

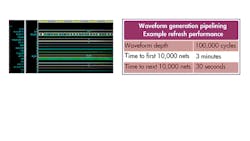

With ZeBu, the verification engineer kicks off a test and lets it run until a failure occurs, capturing trace data along the way. Once a failure occurs, the user can quickly view waveforms linked to the design source code with Verdi3. By then, ZeBu has already captured everything needed to generate full-visibility data, no matter how many billions of cycles have elapsed. Using ZeBu’s interactive Combinatorial Signal Access (iCSA) technology, users can view waveforms in Verdi3 within minutes, rather than the hours typical of traditional emulation waveform generators (Fig. 2).
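The article does not describe how iCSA works internally. As a hedged illustration of the general idea that full visibility can be rebuilt from a much smaller capture, the Python sketch below stores only the primary inputs plus periodic register checkpoints, then recomputes any cycle's register and combinational values by replaying from the nearest checkpoint. The toy accumulator, `CHECKPOINT_INTERVAL`, and all function names are assumptions for illustration, not ZeBu's implementation.

```python
from typing import Dict, List

CHECKPOINT_INTERVAL = 4  # hypothetical: snapshot register state every 4 cycles


def next_state(acc: int, inp: int) -> int:
    """Sequential logic of a toy 8-bit accumulator register."""
    return (acc + inp) & 0xFF


def comb_signals(acc: int, inp: int) -> Dict[str, int]:
    """Combinational nets, computable from register state and inputs alone."""
    total = acc + inp
    return {"sum": total & 0xFF, "carry": int(total > 0xFF), "zero": int((total & 0xFF) == 0)}


def capture(inputs: List[int]) -> Dict[int, int]:
    """Emulation run: inputs are kept in full; register state only at checkpoints."""
    checkpoints: Dict[int, int] = {}
    acc = 0
    for cycle, inp in enumerate(inputs):
        if cycle % CHECKPOINT_INTERVAL == 0:
            checkpoints[cycle] = acc  # register value at the start of this cycle
        acc = next_state(acc, inp)
    return checkpoints


def reconstruct(cycle: int, inputs: List[int], checkpoints: Dict[int, int]) -> Dict[str, int]:
    """Rebuild every signal at `cycle` by replaying from the nearest earlier checkpoint."""
    start = cycle - cycle % CHECKPOINT_INTERVAL
    acc = checkpoints[start]
    for c in range(start, cycle):
        acc = next_state(acc, inputs[c])
    return {"acc": acc, "in": inputs[cycle], **comb_signals(acc, inputs[cycle])}


inputs = [200, 100, 5, 0, 251, 1, 2, 3]
checkpoints = capture(inputs)  # {0: 0, 4: 49}
print(reconstruct(5, inputs, checkpoints))
# {'acc': 44, 'in': 1, 'sum': 45, 'carry': 0, 'zero': 0}
```

The trade-off is the usual one for checkpoint-and-replay: a shorter checkpoint interval stores more state but shortens the replay needed to reach any requested cycle.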

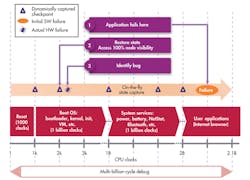

ZeBu’s powerful Post Run Debug mode enables the user to rerun any test deterministically, eliminating seemingly random error chasing (Fig. 3). Instead of spending days or weeks to recreate the same scenario, engineers can rerun any test knowing they will always see the exact same results. This is particularly effective when performing debug on an operating system, where a memory location may have been corrupted early in the boot sequence, and the error isn’t realized until billions of cycles later, making it difficult to debug using only a million-cycle window.
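Deterministic rerun is commonly achieved by recording every nondeterministic input during the original run and feeding back exactly those values on the rerun. The Python sketch below shows that record-and-replay idea on a toy checksum register; the article does not say how Post Run Debug is implemented, so `Recorder`, `replay`, and `run_design` are hypothetical names for a general technique.

```python
import random
from typing import Callable, List

Stimulus = Callable[[int], int]  # cycle number -> input value


def run_design(stimulus: Stimulus, cycles: int) -> List[int]:
    """Toy design: a 16-bit checksum register; returns its value after each cycle."""
    state, history = 0, []
    for cycle in range(cycles):
        state = (state * 31 + stimulus(cycle)) & 0xFFFF
        history.append(state)
    return history


class Recorder:
    """Wraps a nondeterministic source and logs every value it produces."""

    def __init__(self, source: Stimulus) -> None:
        self.source = source
        self.log: List[int] = []

    def __call__(self, cycle: int) -> int:
        value = self.source(cycle)
        self.log.append(value)
        return value


def replay(log: List[int]) -> Stimulus:
    """Return a stimulus that reproduces the recorded values cycle for cycle."""
    return lambda cycle: log[cycle]


# Original run: external traffic is random, but every value is recorded.
recorder = Recorder(lambda cycle: random.randint(0, 255))
original = run_design(recorder, 1_000)

# Reruns driven from the log see the exact same register history every time.
assert run_design(replay(recorder.log), 1_000) == original
assert run_design(replay(recorder.log), 1_000) == original
```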

Conclusion

Achieving comprehensive, full-chip hardware/software debug of SoCs can be extremely frustrating with either traditional simulation or traditional emulation on its own. By combining deterministic rerun and debug of multi-billion-cycle system-level test sequences with full signal visibility and Verdi3 integration for simulation-like debug, advanced emulation systems like Synopsys’ ZeBu give SoC verification teams a way to comprehensively debug SoCs with multi-billion-cycle test cases.

About the Author

Tom Borgstrom

Director of Marketing

Tom Borgstrom is a director of marketing at Synopsys, where he is responsible for the ZeBu emulation solution. Before assuming his current role, he was director of strategic program management in Synopsys’ Verification Group. Prior to joining Synopsys, he held a variety of senior sales, marketing, and applications roles at TransEDA, Exemplar Logic, and CrossCheck Technology and worked as an ASIC design engineer at Matsushita. He received bachelor’s and master’s degrees in electrical engineering from the Ohio State University.
