FAQ: Considering and Building Edge AI Implementations

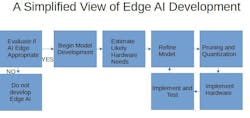

Demand for AI continues to grow at the edge of the enterprise. Gathering data at the edge and using AI there to interpret and act on that data can produce better and faster results. But building edge AI capability isn’t without its challenges, especially when looking at generative AI. Many aspects must be considered (see figure).

The following frequently asked questions (FAQ) address some of the basics and provide a starting point for embarking on your edge AI effort.

How do I know if edge AI is right for my use case?

Not every edge application has, or should be given, an AI capability. Start by defining scenarios where real-time decision-making and low latency are crucial. Examples of such scenarios include industrial predictive maintenance and autonomous-vehicle navigation.

Consider the extent to which AI is likely to improve results, and then consider whether the cost and complexity involved make the investment worthwhile. For example, a relatively simple machine or piece of equipment may benefit from edge monitoring that simply flags a particular vibration frequency known to be the signature indicator of bearing failure for that device, which may be almost the only problem known to occur in the system. This use case may not warrant development of edge AI.
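As a rough illustration of the kind of non-AI monitoring described above, the sketch below measures signal power at a single known fault frequency using the Goertzel algorithm and compares it to a fixed threshold. The function names, the fault frequency, and the threshold are hypothetical; real values would come from the equipment's documented failure signature.

```python
import math

def goertzel_power(samples, sample_rate_hz, target_hz):
    """Return normalized signal power in the DFT bin nearest target_hz."""
    n = len(samples)
    k = round(n * target_hz / sample_rate_hz)  # nearest DFT bin index
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    # Squared magnitude of the bin, normalized by the window length squared
    power = s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2
    return power / (n * n)

def bearing_alarm(samples, sample_rate_hz, fault_hz, threshold):
    """Flag the window if power at the (assumed) fault frequency is too high."""
    return goertzel_power(samples, sample_rate_hz, fault_hz) > threshold
```

A device could call `bearing_alarm` on each window of accelerometer samples. If a check this simple covers the only failure mode that matters, a trained model may add cost without adding much value.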

On the other hand, a more complex system, subject to varying loads and with multiple or more complex failure patterns, could benefit from edge AI. Not only could it better discern potential failure, but it could also provide diagnostic information in real time and accumulate “knowledge” over time.

How can modeling help in implementing edge AI?

In edge AI development, "modeling" is defined as the process of creating and designing the underlying algorithms that power AI in an edge deployment. It gives edge devices the ability to analyze their own local data and provide real-time decision-making with little or no dependence on cloud access or input from a traditional data center. This helps ensure rapid response times while minimizing data transmission requirements.

Ultimately, the model becomes the core of the edge AI implementation. Numerous models are available that can be adopted and adapted, meaning development from scratch is usually not necessary.

How can one ensure that the model can work within hardware constraints?

Because edge devices generally have limited processing power, one of the functions of the modeling process is to optimize the model size and complexity to fit within the hardware constraints, through methods such as quantization or pruning. Quantization is a well-established method for essentially simplifying data (think “rounding”) so that calculations can go faster and use fewer resources.
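To make the “rounding” idea concrete, here is a minimal sketch of symmetric 8-bit quantization, one common scheme among several. It assumes a single shared scale for all values; the function names are illustrative and not taken from any particular framework.

```python
def quantize_int8(values):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round each value to the nearest integer code and clamp to the int8 range
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float values from integer codes."""
    return [c * scale for c in codes]
```

Each value is then stored in one byte instead of four. The recovered values differ from the originals by up to half a scale step, and values much smaller than the scale step collapse to zero.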

However, quantization can introduce errors into algorithms, such as underflow and computational noise. The risks associated with quantization error need to be understood so that the model provides results accurate enough for the given application. Similarly, pruning refers to the removal of select parameters that don’t contribute to the desired results, with the goal of maintaining accuracy while increasing efficiency.
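One simple way to approximate pruning is to zero out the weights with the smallest magnitudes. The sketch below assumes that magnitude is a reasonable proxy for a parameter's contribution, which is a common heuristic rather than a guarantee; the function name and the sparsity parameter are hypothetical.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned
```

In practice, accuracy would be re-measured on validation data after each pruning step, since removing too many parameters can degrade results.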

To be useful at the edge, the model also must be prepared to deliver real-time inferencing. Training develops and tests a model's inferencing ability and usually is accomplished by applying large datasets, preferably from the exact environment the model is intended to serve. This process can potentially be continued even after the edge device is set up and operational.

How important is hardware selection for edge AI?

Though software and data are the distinctive aspects of edge AI, nothing happens without the right hardware. And while hardware in edge applications is usually not very exotic, its careful selection and implementation are both important and challenging. The physical environment of the edge may dictate the size and form factor of the hardware. It may need to be ruggedized to tolerate temperature extremes, moisture, or vibration, and it must perform within whatever power limitations exist at the edge location.

As in all edge deployments, there’s a balancing act between cost, power availability, processing needs, and, of course, concerns about latency and processing speed. This is necessarily an iterative process: scoping out needs, narrowing hardware options, and developing a model.

Edge devices must have sufficient processing power and memory to run your AI model; otherwise, edge AI will fall short. But devices also need to be able to fit within power consumption and size limits. Within those limits, pruning and quantization can help minimize hardware requirements. Architecture choices are also important. For instance, neural-network architectures exist that are optimized for low-power edge computing.
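A back-of-the-envelope check can show whether a model's weights are likely to fit in device memory at a given precision. This sketch counts weight storage only and assumes, purely for illustration, that half of RAM is available for weights; activations, the runtime, and the rest of the application need room as well.

```python
import math

def weight_bytes(num_params, bits_per_param):
    """Storage needed for the model weights alone, in bytes."""
    return math.ceil(num_params * bits_per_param / 8)

def fits_in_memory(num_params, bits_per_param, ram_bytes, weight_budget=0.5):
    """True if the weights fit in the assumed fraction of RAM set aside for them."""
    return weight_bytes(num_params, bits_per_param) <= ram_bytes * weight_budget
```

For a hypothetical 5-million-parameter model on a device with 16 MiB of RAM, 32-bit weights need 20,000,000 bytes and exceed the 8 MiB budget, while 8-bit weights need 5,000,000 bytes and fit.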

How important are security considerations for edge AI?

Increasingly, the edge is vulnerable to sophisticated cyber threats. Therefore, security should be considered throughout development, into deployment, and beyond. Access controls and permissions are an obvious place to start, but these may need to be aligned with enterprise-wide security practices. Equally important, and potentially independent of broader policies, encryption can be implemented within edge activities. It strengthens security considerably, though it adds some computing overhead.

Next Steps

This collection of FAQs is high level and simplified, but many tools and options are available to make edge AI deployment and management worthwhile. Some of these links can help you get to next steps:

>>Check out this TechXchange for similar articles and videos

About the Author

Alan Earls

Contributing Editor
